Chain Rule and Backpropagation Simplified

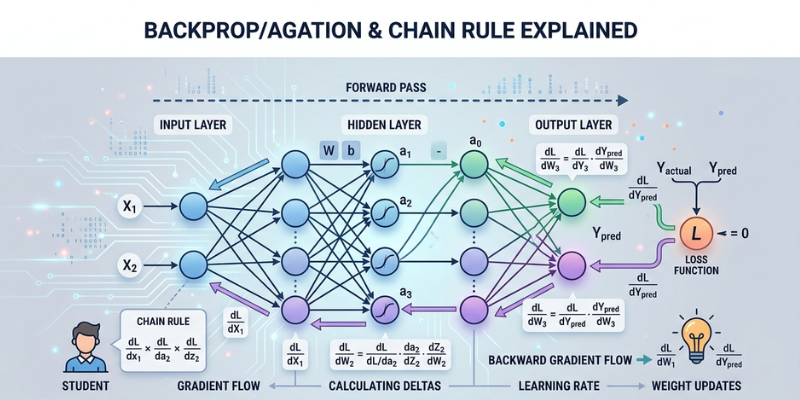

Deep learning models can recognize images, understand speech, and even generate text. Behind these impressive systems lies an important mathematical idea called the chain rule. This concept helps neural networks learn from mistakes and improve predictions over time. Understanding the chain rule and backpropagation becomes easier when we connect them to simple learning processes.

When a neural network makes a prediction, it compares the result with the correct answer. The model then adjusts itself step by step to reduce errors. The gradients that guide these adjustments are computed by a process known as backpropagation. It applies the chain rule from calculus to determine how much each component of the network contributed to the error. If you want to build a strong foundation in machine learning concepts, you can explore a Data Science Course in Trivandrum at FITA Academy to strengthen your practical understanding effectively.

What is the Chain Rule

The chain rule is a rule from calculus for computing the derivative of a composite function, that is, a function built by feeding the output of one function into another. In plain terms, it tells us how a small change in one value affects another value several steps downstream: the overall rate of change is the product of the rates of change at each step.

Imagine a neural network as a chain of connected operations. Each layer passes information to the next layer. If the final prediction is wrong, the model needs to know which layer caused the error and by how much. The chain rule makes this possible by tracking the influence of each layer backward through the network.
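To make the idea concrete, here is a minimal sketch of the chain rule on two chained operations. The functions `inner` and `outer` are hypothetical stand-ins for two layers and are not taken from any particular network. The derivative obtained by multiplying the per-step derivatives is compared against a numerical estimate.

```python
# Two chained operations: x -> u = 3x + 1 -> y = u**2
def inner(x):
    return 3.0 * x + 1.0

def outer(u):
    return u ** 2

def chain_rule_derivative(x):
    u = inner(x)
    dy_du = 2.0 * u   # derivative of the outer step with respect to u
    du_dx = 3.0       # derivative of the inner step with respect to x
    return dy_du * du_dx  # chain rule: multiply the per-step derivatives

def numerical_derivative(x, eps=1e-6):
    # Central difference: nudge x slightly and watch how y responds.
    return (outer(inner(x + eps)) - outer(inner(x - eps))) / (2 * eps)

x = 2.0
analytic = chain_rule_derivative(x)
numeric = numerical_derivative(x)
print(analytic, numeric)
```

At `x = 2.0` the intermediate value is 7, so the chain rule gives 2 × 7 × 3 = 42, and the numerical estimate should agree closely.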

This process is important because neural networks often contain thousands or even millions of parameters. Without the chain rule, updating all these values accurately would be extremely difficult.

Understanding Backpropagation

Backpropagation is the learning mechanism used in neural networks. It helps the model improve after every prediction. The procedure begins when the network measures the difference between its predicted output and the correct answer, usually with a loss function.

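The network then moves backward layer by layer to calculate gradients. A gradient shows how the error changes when a parameter changes, so moving the parameter a small step in the opposite direction reduces the error. Parameters with larger gradients, meaning those that contribute more to the mistake, receive larger adjustments.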
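The sketch below shows one such adjustment for a single parameter. It assumes a squared-error loss and a fixed learning rate; the names `w`, `x`, `target`, and `learning_rate` are illustrative choices, not part of any specific library.

```python
def loss(w, x, target):
    # Squared error of a one-weight model: prediction = w * x
    return (w * x - target) ** 2

def gradient(w, x, target):
    # Chain rule: d(loss)/dw = 2 * (prediction - target) * x
    return 2.0 * (w * x - target) * x

w = 0.5
x, target = 2.0, 3.0
learning_rate = 0.1

before = loss(w, x, target)
g = gradient(w, x, target)
w_new = w - learning_rate * g   # step against the gradient
after = loss(w_new, x, target)

print(before, g, w_new, after)
```

The gradient here is negative, so the update increases `w`, and the loss after the step is smaller than before.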

This learning cycle repeats many times during training. As the model keeps adjusting its parameters, predictions gradually become more accurate. Backpropagation allows deep learning systems to improve efficiently without manually changing each value.

Why the Chain Rule Matters in Neural Networks

Neural networks contain several layers connected in sequence. Each layer transforms data before passing it to the next one. Since every layer depends on previous layers, errors must be traced backward carefully.

The chain rule helps calculate the contribution of every layer to the final prediction error. This calculation ensures that each parameter is updated in proportion to its actual effect on the error. Without it, the effect of each parameter would have to be estimated separately, which quickly becomes impractical as networks grow.
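As a rough illustration of tracing an error backward, the following sketch uses a hypothetical two-layer network with a single scalar weight per layer and no activation function. These simplifications are assumptions made to keep the arithmetic visible. Note how the gradient for the first layer reuses the error signal already computed for the second.

```python
# Hypothetical two-layer network with one scalar weight per layer.
def forward(w1, w2, x):
    h = w1 * x        # layer 1 output
    y_hat = w2 * h    # layer 2 output (the prediction)
    return h, y_hat

def loss_fn(w1, w2, x, y):
    _, y_hat = forward(w1, w2, x)
    return (y_hat - y) ** 2

def backward(w1, w2, x, y):
    h, y_hat = forward(w1, w2, x)
    dL_dy_hat = 2.0 * (y_hat - y)  # how the loss reacts to the prediction
    dL_dw2 = dL_dy_hat * h         # gradient for the layer 2 weight
    dL_dh = dL_dy_hat * w2         # error signal passed back to layer 1
    dL_dw1 = dL_dh * x             # gradient for the layer 1 weight
    return dL_dw1, dL_dw2

x, y = 1.5, 2.0
w1, w2 = 0.8, -0.5
grad_w1, grad_w2 = backward(w1, w2, x, y)
print(grad_w1, grad_w2)
```

With these values the gradients come out to roughly 3.9 for `w1` and -6.24 for `w2`, which can be confirmed by nudging each weight slightly and re-evaluating `loss_fn`.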

Another reason the chain rule is important is efficiency. Modern AI models process huge amounts of data. Because the chain rule lets backpropagation reuse the gradient already computed for a later layer when working on the layer before it, all gradients can be obtained in a single backward pass, making large-scale training practical for real-world applications. If you are interested in learning how neural networks optimize predictions in practical projects, you can consider joining a Data Science Course in Kochi to gain hands-on experience in this field.

A Simple Example of Backpropagation

Suppose a neural network predicts the price of a house. The actual price is higher than the prediction. The model now needs to understand why the prediction was incorrect.

Backpropagation begins by measuring the prediction error. Then the chain rule calculates how much each neuron affected that error. The network adjusts weights and biases slightly to reduce future mistakes.

After many training cycles, the model learns patterns more effectively. Predictions become closer to actual values because the network continuously updates itself based on feedback.
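The house-price example can be sketched as a small training loop. Everything here is an assumption chosen for illustration: the data points are made up, the model is a single neuron with one weight and one bias, and the loss is mean squared error minimized with plain gradient descent.

```python
# Hypothetical data: house size (in 100 m^2) and price (in 100k units).
sizes = [1.0, 2.0, 3.0]
prices = [1.5, 2.5, 3.5]

def mean_squared_error(w, b):
    return sum((w * s + b - p) ** 2 for s, p in zip(sizes, prices)) / len(sizes)

def train(epochs=2000, learning_rate=0.05):
    w, b = 0.0, 0.0
    n = len(sizes)
    for _ in range(epochs):
        grad_w = 0.0
        grad_b = 0.0
        for s, p in zip(sizes, prices):
            error = (w * s + b) - p          # prediction error for one house
            grad_w += 2.0 * error * s / n    # chain rule through w * s
            grad_b += 2.0 * error / n        # chain rule through + b
        w -= learning_rate * grad_w          # adjust weight slightly
        b -= learning_rate * grad_b          # adjust bias slightly
    return w, b

initial_loss = mean_squared_error(0.0, 0.0)
w, b = train()
final_loss = mean_squared_error(w, b)
print(initial_loss, final_loss, w, b)
```

Because the made-up prices follow price = size + 0.5 exactly, the learned weight and bias should approach 1.0 and 0.5, and the final loss should be far below the initial one.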

This process is similar to how humans learn from mistakes. We analyze what went wrong, make adjustments, and improve performance over time.

The chain rule and backpropagation are the backbone of deep learning systems. The chain rule helps calculate how errors move through connected layers, while backpropagation uses these calculations to improve model performance. Together, they allow neural networks to learn efficiently from data.

For beginners, understanding these concepts is an important step toward mastering artificial intelligence and machine learning. Once the basics become clear, advanced topics such as deep neural networks and optimization techniques become much easier to understand. If you plan to build expertise in AI technologies and practical analytics, you can take a Data Science Course in Pune to develop industry-ready skills with greater confidence and clarity.

